- Square

- Photosblender Linear Or Square Image Blending 1 1 20

- Photos Blender Linear Or Square Image Blending 1 1 2 2 Tetramethylcyclopropane

A Brief Tutorial On Interpolation for Image Scaling

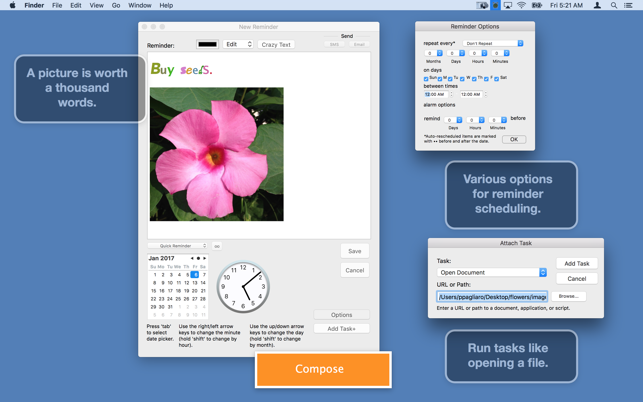

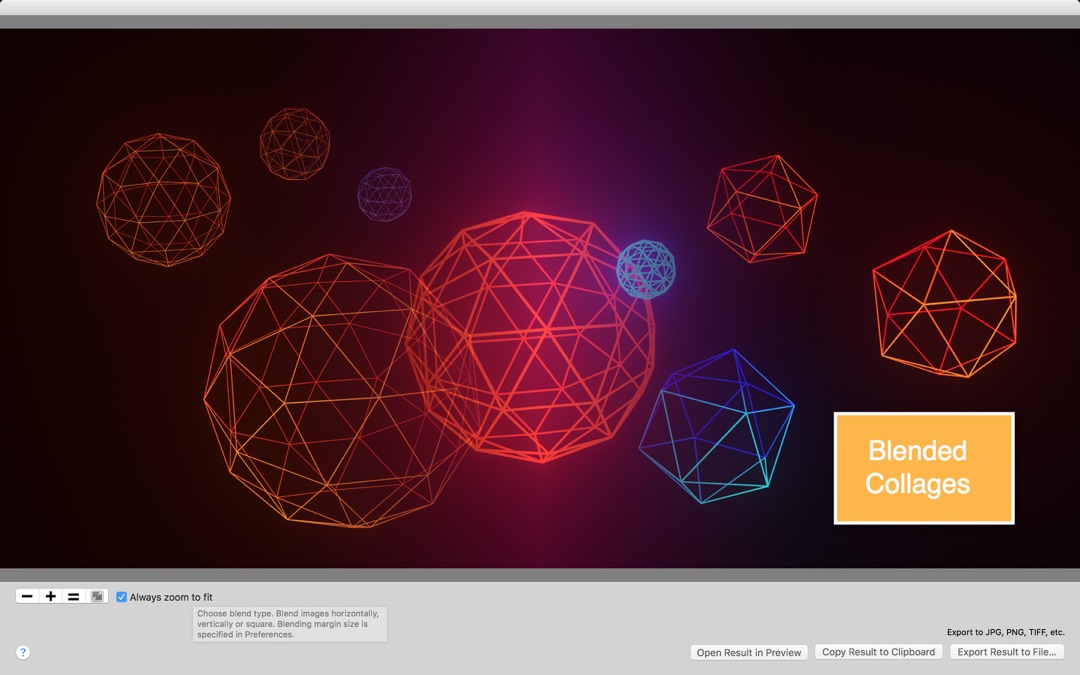

Common Names: Blend, Merge

Brief Description. This operator forms a blend of two input images of the same size. Similar to pixel addition, the value of each pixel in the output image is a linear combination of the corresponding pixel values in the input images. The coefficients of the linear combination are user-specified and they define the ratio by which to scale each image before.

Blender Models and more; Share, discuss and download blender 3d models of all kinds! Official Blender Model Repository.

Download PhotosBlender - Linear or Square Image Blending for macOS 10.10 or later and enjoy it on your Mac. PhotosBlender is an app for combining photos horizontally, vertically or square using gradient blending at the seams where they are joined.

Free Blender 3D models in OBJ, Blend, STL, FBX, Three.JS formats for use in Unity 3D, Blender, Sketchup, Cinema 4D, Unreal, 3DS Max and Maya.

1) Locate the files you want to blend in the Finder, Photos, or your web browser.

2) Drag the files you want to blend into the Blender window's 'photo browser' at the bottom. Drag and drop the files in the browser to reorder them: drag one in between two others, or drag one on top of another to swap them.

Michael Gleicher, 10/12/99

When an image is scaled up to a larger size, there is a question of what to do with the new spaces in between the original pixels. Here, we briefly examine some of the differences.

Resampling in 1D.

To begin, let's consider the 1D case of a signal (call it f) that we would like to dilate (expand in time) by a factor of 2 (call the resulting signal g). This means that

g(t) = f(t/2)

We must consider the problem that these signals are uniformly sampled (at integer values of t). The issue arises that some of the samples we would like to have of g (namely the odd integers) do not correspond to a sample of f. As we know from our discussions of sampling theory, there is no way to know what the signal does in between samples.

Theoretically, what we would like to do is construct a continuous representation for f, and then sample that. If we knew that the signal was sampled properly (e.g. the original signal had no frequencies higher than 1/2 cycle per sample, which is the Nyquist frequency for this sampling period), then we could do an 'ideal' reconstruction.

The theoretical process is a good hint at what the 'right' answer is: we should create g such that it has no new high frequencies. Of course, that answer is only right if the original signal was properly sampled. In practice, the 'right' answer may be a matter of artistic taste.

To look at a specific example, let's consider a simple f that is a triangle wave with sampled values [0 2 4 6 4 2 0 2 4 6 4 2 0]. This gives us a picture like:

What we'd like to do is double the time, which means that we know the even samples.

So we need to know what happens 'in between' these samples. If we had the original signal that f was sampled from, we could sample the signal at all of the desired points.

Which of course would have required us to have the original signal (or to at least reconstruct it).

With the numbers, there are two obvious choices:

- Simply double each sample (e.g. repeat it twice). So that our original signal will become [0 0 2 2 4 4 6 6 4 4 2 2 0 0 2 2 4 4 6 6 4 4 2 2 0 0].

- Make the new samples halfway between the previous samples [0 1 2 3 4 5 6 5 4 3 2 1 0 1 2 3 4 5 6 5 4 3 2 1 0]. As it turns out, this is exactly the right answer for the picture drawn above. However, this is a fortunate coincidence.

The first version we call value replication (or pixel replication in the case of images). The second is interpolation.
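The two choices can be sketched in a few lines of Python. This is only an illustration of the doubling case: the function names are made up for this example, and both functions assume a non-empty list of samples.

```python
def double_by_replication(samples):
    """Value replication: repeat every sample twice."""
    out = []
    for v in samples:
        out.extend([v, v])
    return out


def double_by_midpoints(samples):
    """Interpolation: keep each sample and insert the average of each adjacent pair."""
    out = []
    for a, b in zip(samples, samples[1:]):
        out.extend([a, (a + b) / 2])
    out.append(samples[-1])  # the last sample has no right-hand neighbor
    return out


triangle = [0, 2, 4, 6, 4, 2, 0, 2, 4, 6, 4, 2, 0]
print(double_by_replication(triangle))
print(double_by_midpoints(triangle))
```

Note that replication produces twice as many samples as the input, while the midpoint version produces one fewer, because nothing is interpolated past the last sample.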

Pixel replication is sometimes called 'nearest neighbor' because it picks the sample closest to the value we're looking for. In the case of doubling the image size, we chose to pick the lower value in the case of a tie (e.g. when we look for a value for time = 1.5, we pick sample 1). For an example where this makes a difference, consider tripling the time. In this case, we would want to look for time values of 1 1/3 and 1 2/3, which are closest to 1 and 2 respectively.
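For a general integer scale factor, the nearest neighbor rule might be sketched as follows. The function name, the use of exact fractions to avoid rounding surprises at ties, and the clamping at the right edge are all choices made for this example rather than anything prescribed above.

```python
import math
from fractions import Fraction


def nearest_neighbor_scale(samples, factor):
    """Stretch `samples` by an integer `factor` using the closest source sample.

    Ties (positions exactly halfway between two samples) go to the lower index.
    """
    last = len(samples) - 1
    out = []
    for i in range(len(samples) * factor):
        t = Fraction(i, factor)                 # exact position in the source signal
        idx = math.ceil(t - Fraction(1, 2))     # round to nearest, halves round down
        out.append(samples[min(idx, last)])     # clamp positions past the last sample
    return out


print(nearest_neighbor_scale([0, 2, 4], 2))
print(nearest_neighbor_scale([0, 2, 4], 3))
```

With a factor of 3, the positions 1 1/3 and 1 2/3 map to samples 1 and 2, as described above.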

The halfway method is interpolation. More generally, we blend the nearby samples. The simple way to do this is to draw a line between the two samples and pick the value along the line. This is a simple form of reconstruction. In equation form, we might say

f(t) = (1-s) * f(floor(t)) + s * f(ceil(t))

where floor is the function that picks the largest integer no larger than t, ceil is the 'ceiling' function that picks the smallest integer no smaller than t, and s is t-floor(t), or the distance between t and the sample to its left. When s is 0 we get the left sample back exactly, and as s approaches 1 the result approaches the right sample. This is linear interpolation since we are fitting straight lines between the samples.
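As a rough sketch, linear interpolation can be written directly from the definitions of floor, ceil and s. The left sample is weighted by 1-s and the right sample by s, so that s = 0 returns the left sample. The function names here are invented for the example, and t is assumed to lie between 0 and the last sample index.

```python
import math


def lerp_sample(samples, t):
    """Evaluate the piecewise-linear reconstruction of `samples` at position t."""
    lo = math.floor(t)
    hi = math.ceil(t)
    s = t - lo                       # distance from the left sample, in [0, 1)
    return (1 - s) * samples[lo] + s * samples[hi]


def linear_scale(samples, factor):
    """Stretch `samples` by an integer `factor` using linear interpolation."""
    n_out = (len(samples) - 1) * factor + 1
    return [lerp_sample(samples, i / factor) for i in range(n_out)]


print(linear_scale([0, 2, 4, 6, 4], 2))
print(lerp_sample([0, 2, 4], 4 / 3))   # one third of the way from sample 1 to sample 2
```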

Now you might wonder which of the two methods described so far is 'right'. The answer is 'it depends.' Suppose we have the triangle wave, and we triple the time (rather than just doubling it). We get two very different looking results:

for linear interpolation, and

for nearest neighbor. Since in this example we knew that we wanted a triangle wave, one is clearly better than the other. However, if we just had the sampled signal, it might really have been the 'jaggy' stairstep. For example, suppose we have a square wave [0 0 1 1 0 0 1 1 0 0 1 1]. In this case, using linear interpolation gives an overly smooth result, while nearest neighbor gives a square wave. Nearest neighbor interpolation gives us a square wave

while linear interpolation gives us something that might be too smooth.

Bi-cubic interpolation achieves results between these two choices. It estimates how sharp an edge there should be by estimating the derivatives at each sample and then fitting a cubic curve between the samples.
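To make the idea concrete in 1D, here is one possible sketch. It is an assumption on my part about which cubic to use: the derivative at each sample is estimated with a central difference (one-sided at the ends), and a cubic Hermite curve is fitted between neighboring samples, which is the Catmull-Rom style of cubic interpolation. Image editors may use different cubic kernels. The names are hypothetical, and at least two samples are assumed.

```python
import math


def _slope(samples, k):
    """Estimate the derivative at sample k (central difference, one-sided at the ends)."""
    lo = max(k - 1, 0)
    hi = min(k + 1, len(samples) - 1)
    return (samples[hi] - samples[lo]) / (hi - lo)


def cubic_sample(samples, t):
    """Evaluate a cubic Hermite curve through `samples` at position t."""
    k = min(math.floor(t), len(samples) - 2)   # left sample of the segment containing t
    s = t - k
    p0, p1 = samples[k], samples[k + 1]
    m0, m1 = _slope(samples, k), _slope(samples, k + 1)
    # Standard cubic Hermite basis functions.
    h00 = 2 * s**3 - 3 * s**2 + 1
    h10 = s**3 - 2 * s**2 + s
    h01 = -2 * s**3 + 3 * s**2
    h11 = s**3 - s**2
    return h00 * p0 + h10 * m0 + h01 * p1 + h11 * m1


square = [0, 0, 1, 1]
print(cubic_sample(square, 1.25))   # linear interpolation would give 0.25 here
```

On the rising edge of the square wave the cubic stays closer to 0 just after sample 1 than the straight line does, so the edge is somewhat sharper than with linear interpolation but not as abrupt as with nearest neighbor.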

Reconstruction Kernels in 1D

Before moving on to 2D, let's consider a slightly non-intuitive way to implement these methods.

If we take our original samples and 'space them out,' we get a chain of spikes. Going back to our original example triangle wave [0 2 4 6 4 2 0 2 4 6 4 2 0], we would just put in zeros in the length doubled version, e.g. [0 0 2 0 4 0 6 0 4 0 2 0 0 0 2 0 4 0 6 0 4 0 2 0 0 0]. If we filter this 'spike chain' we then get the reconstruction processes described above. By choosing the correct filters, we can get different types of reconstruction. For example, nearest neighbor interpolation for size doubling can be implemented by the reconstruction kernel [0 1 1]. The linear interpolation can be implemented by the kernel [.5 1 .5].

For other spacings, we just use other kernels. For example, the nearest neighbor kernel for tripling is [1 1 1], and the linear interpolation kernel is 1/3 [1 2 3 2 1]. Other kernels give different reconstructions. For example, we might use the kernel 1/6 [1 5 6 5 1].
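The spike chain approach might be sketched like this. The helper names are invented for the example, the kernel is assumed to have odd length with its middle entry centered on the output sample, and anything beyond the ends of the signal is treated as zero, so the values at the very edges can differ from the direct methods.

```python
def zero_stuff(samples, factor):
    """Space the samples out, inserting factor-1 zeros after each one."""
    out = []
    for v in samples:
        out.append(v)
        out.extend([0] * (factor - 1))
    return out


def filter_centered(signal, kernel):
    """Convolve `signal` with an odd-length `kernel` centered on each output sample."""
    half = len(kernel) // 2
    out = []
    for n in range(len(signal)):
        acc = 0
        for j, w in enumerate(kernel):
            k = n - (j - half)            # convolution index; kernel[half] lines up with signal[n]
            if 0 <= k < len(signal):      # samples outside the signal count as zero
                acc += w * signal[k]
        out.append(acc)
    return out


spikes = zero_stuff([0, 2, 4, 6, 4], 2)
print(filter_centered(spikes, [0, 1, 1]))        # value replication
print(filter_centered(spikes, [0.5, 1, 0.5]))    # linear interpolation

tripled = zero_stuff([0, 3, 6], 3)
print(filter_centered(tripled, [1/3, 2/3, 1, 2/3, 1/3]))  # linear interpolation, tripling
```

The only thing that changes between the methods is the kernel, which is the uniformity this formulation is meant to provide.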

This implementation has several advantages. First, it gives a uniform way to implement lots of different interpolation types. By choosing the reconstruction kernels, we can get different types of results. Even the bicubic interpolation described above can be implemented this way. Second, it corresponds more closely with the theory of signal reconstruction, which makes design of the reconstruction kernels possible. Third, with a uniform method for kernel design, it is easier to extend this method to different scaling sizes and to 2D.

Scaling in 2D

Everything we said in 1D also applies in 2D. The biggest difference is that pixels have more neighbors. For example, consider enlarging this image by a factor of 2. I have given some of the pixels one-character names for future reference.

Notice that pixel e has neighbors A and B, while pixel g has 4 neighbors. For the pixels with 2 neighbors, the methods we discussed in 1D apply. For those with 4 neighbors, we need to do something different.

The nearest neighbor process has an obvious extension. Linear interpolation requires an extension into two dimensions. We linearly interpolate along each dimension, so the process is called bi-linear interpolation. For the doubling case above, the pixel e would be halfway between A and B (by linear interpolation). Similarly pixels f, h, and i can be found. Pixel g is then halfway between e and i (or equivalently, between f and h). Since e = 1/2 (A+B) and i = 1/2 (C+D), g = 1/4 (A+B+C+D).
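A small sketch of bi-linear doubling on an image stored as a list of rows follows. The function name is made up, and since the figure is not reproduced here, the comments assume A and B are the top two original pixels and C and D the two below them.

```python
def bilinear_double(image):
    """Enlarge a 2D image (list of rows) by a factor of 2 with bi-linear interpolation.

    The result has 2h-1 rows and 2w-1 columns, since nothing is
    interpolated beyond the last row or column.
    """
    h, w = len(image), len(image[0])
    out = []
    for y in range(2 * h - 1):
        row = []
        for x in range(2 * w - 1):
            y0, x0 = y // 2, x // 2        # original pixel above / to the left
            y1, x1 = y0 + y % 2, x0 + x % 2  # same pixel if on the grid, else the next one
            # On an original pixel all four terms coincide; between two pixels
            # this is their average; in the middle of four it is (A+B+C+D)/4.
            total = image[y0][x0] + image[y0][x1] + image[y1][x0] + image[y1][x1]
            row.append(total / 4)
        out.append(row)
    return out


# A = 0, B = 4 on the top row; C = 8, D = 12 on the bottom row.
for row in bilinear_double([[0, 4], [8, 12]]):
    print(row)
```

The center value of the result is (0 + 4 + 8 + 12) / 4 = 6, matching the expression for g above.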

These reconstructions can be done using the same spike generation and reconstruction filter kernels as in 1D, except that the kernels are now 2D. In the above example, the 'spike' image would be:

The reconstruction kernels for pixel doubling are

1 | 1 |

1 | 1 |

for nearest neighbor, and

1/4 | 1/2 | 1/4 |

1/2 | 1 | 1/2 |

1/4 | 1/2 | 1/4 |

for linear interpolation.
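The 2D version of the spike-and-filter process can be sketched as below. The helper names are hypothetical. The 2D kernels are built here as outer products of the 1D doubling kernels [0 1 1] and [.5 1 .5], which is an assumption on my part about how the 2D kernels relate to the 1D ones, and pixels outside the image are treated as zero.

```python
def zero_stuff_2d(image, factor=2):
    """Build the 'spike' image: original pixels every `factor` steps, zeros elsewhere."""
    h, w = len(image), len(image[0])
    out = [[0] * (w * factor) for _ in range(h * factor)]
    for y in range(h):
        for x in range(w):
            out[y * factor][x * factor] = image[y][x]
    return out


def outer(col, row):
    """Outer product of two 1D kernels, giving a separable 2D kernel."""
    return [[a * b for b in row] for a in col]


def filter_2d(image, kernel):
    """2D convolution with an odd-sized kernel centered on each output pixel."""
    h, w = len(image), len(image[0])
    hy, hx = len(kernel) // 2, len(kernel[0]) // 2
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            acc = 0
            for i, krow in enumerate(kernel):
                for j, wgt in enumerate(krow):
                    yy, xx = y - (i - hy), x - (j - hx)
                    if 0 <= yy < h and 0 <= xx < w:   # outside the image counts as zero
                        acc += wgt * image[yy][xx]
            out[y][x] = acc
    return out


spikes = zero_stuff_2d([[0, 4], [8, 12]])
nearest = outer([0, 1, 1], [0, 1, 1])
bilinear = outer([0.5, 1, 0.5], [0.5, 1, 0.5])
for row in filter_2d(spikes, nearest):
    print(row)
for row in filter_2d(spikes, bilinear):
    print(row)
```

The last row and column of the bi-linear result fade toward zero because of the zero padding; a real implementation would more likely replicate or clamp the border.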

Note: while the linear interpolation kernel may resemble the binomial kernels, they are not the same as the sizes grow. The binomial kernels are more rounded.

Trying this out

You can try this out using Photoshop or PaintShop Pro. Pick an image, and then use the resize operator to make it bigger. Each program lets you choose between nearest neighbor, bilinear, and bicubic interpolation.

Showing the results here is pretty pointless since web browsers and web image formats can lose the details of images.

The OpenGL ES Registry contains specifications of the core API and shading language; specifications of Khronos- and vendor-approved OpenGL ES extensions; header files corresponding to the specifications; and related documentation.

The OpenGL ES Registry is part of the Combined OpenGL Registry for OpenGL, OpenGL ES, and OpenGL SC, which includes the XML API registry of reserved enumerants and functions.

Table of Contents

- Working Group Policy for when Specifications and extensions will be updated.

- Current OpenGL ES API and Shading Language Specifications and Reference Pages

- Core API and Extension Header Files

- IP Disclosures Potentially Affecting OpenGL ES Implementations

OpenGL ES Core API and Shading Language Specifications and Reference Pages

The current version of OpenGL ES is OpenGL ES 3.2. Specifications for older versions 3.1, 3.0, 2.0, 1.1, and 1.0 are also available below. For additional specifications, headers, and documentation not listed below, see the Khronos.org Developer Pages. Header files not labelled with a revision date include their last update time in comments near the top of the file.

OpenGL ES 3.2 Specifications and Documentation

- OpenGL ES 3.2 Specification (October 22, 2019), without changes marked and with changes marked.

- OpenGL ES Shading Language 3.20 Specification (July 10, 2019) (HTML) (PDF)

- OpenGL ES Quick Reference Card (available for different API versions).

OpenGL ES 3.1 Specifications and Documentation

- OpenGL ES 3.1 Specification (November 3, 2016), without changes marked and with changes marked.

- OpenGL ES Shading Language 3.10 Specification (January 29, 2016), without changes marked and with changes marked.

OpenGL ES 3.0 Specifications and Documentation

- OpenGL ES 3.0.6 Specification (November 1, 2019), without changes marked and with changes marked.

- OpenGL ES Shading Language 3.00 Specification (January 29, 2016).

OpenGL ES 2.0 Specifications and Documentation

- OpenGL ES 2.0 Full Specification, Full Specification with changes marked, Difference Specification (November 2, 2010). A Japanese translation of the specification is also available.

- OpenGL ES Shading Language 1.00 Specification (May 12, 2009).

OpenGL ES 1.1 Specifications and Documentation

- OpenGL ES 1.1 Full Specification and Difference Specification (April 24, 2008).

- OpenGL ES 1.1.03 Extension Pack (July 19, 2005).

OpenGL ES 1.0 Specification and Documentation

- OpenGL ES 1.0.02 Specification.

- gl.h for OpenGL ES 1.0.

- The old OpenGL ES 1.0 and EGL 1.0 Reference Manual is obsolete and has been removed from the Registry. Please use the OpenGL ES 1.1 Online Reference Pages instead.


API and Extension Header Files

Because extensions vary from platform to platform and driver to driver, OpenGL ES segregates headers for each API version into a header for the core API (OpenGL ES 1.0, 1.1, 2.0, 3.0, 3.1 and 3.2) and a separate header defining extension interfaces for that core API. These header files are supplied here for developers and platform vendors. They define interfaces including enumerants, prototypes, and for platforms supporting dynamic runtime extension queries, such as Linux and Microsoft Windows, function pointer typedefs. Please report problems as Issues in the OpenGL-Registry repository.

In addition to the core API and extension headers, there is also an OpenGL ES version-specific platform header file intended to define calling conventions and data types specific to a platform.

Almost all of the headers described below depend on a platform header file common to multiple Khronos APIs called <KHR/khrplatform.h>.

Vendors may include modified versions of any or all of these headers with their OpenGL ES implementations, but in general only the platform-specific OpenGL ES and Khronos headers are likely to be modified by the implementation. This makes it possible for developers to drop in more recently updated versions of the headers obtained here, typically when new extensions are supplied on a platform.

OpenGL ES 3.2 Headers

- <GLES3/gl32.h> OpenGL ES 3.2 Header File.

- <GLES2/gl2ext.h> OpenGL ES Extension Header File (this header is defined to contain all defined extension interfaces for OpenGL ES 2.0 and all later versions, since later versions are backwards-compatible with OpenGL ES 2.0).

- <GLES3/gl3platform.h> OpenGL ES 3.2 Platform-Dependent Macros (this header is shared with OpenGL ES 3.0 and 3.1).

OpenGL ES 3.1 Headers

- <GLES3/gl31.h> OpenGL ES 3.1 Header File.

- <GLES2/gl2ext.h> OpenGL ES Extension Header File.

- <GLES3/gl3platform.h> OpenGL ES 3.1 Platform-Dependent Macros (this header is shared with OpenGL ES 3.0).

OpenGL ES 3.0 Headers

- <GLES3/gl3.h> OpenGL ES 3.0 Header File.

- <GLES2/gl2ext.h> OpenGL ES Extension Header File.

- <GLES3/gl3platform.h> OpenGL ES 3.0 Platform-Dependent Macros.


OpenGL ES 2.0 Headers

- <GLES2/gl2.h> OpenGL ES 2.0 Header File.

- <GLES2/gl2ext.h> OpenGL ES Extension Header File.

- <GLES2/gl2platform.h> OpenGL ES 2.0 Platform-Dependent Macros.


OpenGL ES 1.1 Headers

- <GLES/gl.h> OpenGL ES 1.1 Header File.

- <GLES/glext.h> OpenGL ES 1.1 Extension Header File.

- <GLES/glplatform.h> OpenGL ES 1.1 Platform-Dependent Macros.

- <GLES/egl.h> EGL Legacy Header File for OpenGL ES 1.1 (August 6, 2008) - requires <EGL/egl.h> from the EGL Registry.

Khronos Shared Platform Header (<KHR/khrplatform.h>)

- The OpenGL ES 3.0, 2.0, and 1.1 headers all depend on the shared <KHR/khrplatform.h> header from the EGL Registry.

Extension Specifications by number

- GL_OES_texture_float_linear, GL_OES_texture_half_float_linear

- GL_OES_texture_float, GL_OES_texture_half_float

- GL_EXT_multi_draw_arrays, GL_SUN_multi_draw_arrays

- GL_ANGLE_texture_compression_dxt3, GL_ANGLE_texture_compression_dxt1, GL_ANGLE_texture_compression_dxt5

- GL_KHR_texture_compression_astc_hdr, GL_KHR_texture_compression_astc_ldr

- GL_EXT_shader_framebuffer_fetch, GL_EXT_shader_framebuffer_fetch_non_coherent

- GL_NV_blend_equation_advanced, GL_NV_blend_equation_advanced_coherent

- GL_KHR_blend_equation_advanced, GL_KHR_blend_equation_advanced_coherent

- GL_EXT_geometry_shader, GL_EXT_geometry_point_size

- GL_EXT_tessellation_shader, GL_EXT_tessellation_point_size

- GL_KHR_context_flush_control, GLX_ARB_context_flush_control, WGL_ARB_context_flush_control

- GL_OES_tessellation_shader, GL_OES_tessellation_point_size

- GL_EXT_texture_compression_astc_decode_mode, GL_EXT_texture_compression_astc_decode_mode_rgb9e5

- GL_EXT_memory_object, GL_EXT_semaphore

- GL_EXT_memory_object_fd, GL_EXT_semaphore_fd

- GL_EXT_memory_object_win32, GL_EXT_semaphore_win32